
| author | Tasos Katsoulas <12612986+tkatsoulas@users.noreply.github.com> | 2023-02-02 15:23:54 +0200 |
|---|---|---|
| committer | GitHub <noreply@github.com> | 2023-02-02 15:23:54 +0200 |
| commit | 9f1403de7d3ea2633768d34095afcf880c7c4e2d (patch) | |
| tree | 0c50a1f42b3e182f6cd5de4e92c609cc76fd3cb5 /docs/guides/monitor | |
| parent | caf18920aac38eed782647957e82c0ab7f64ec17 (diff) | |

Covert our documentation links to GH absolute links (#14344)

Signed-off-by: Tasos Katsoulas <tasos@netdata.cloud>

Diffstat (limited to 'docs/guides/monitor')

| Mode | File | Lines changed |
|---|---|---|
| -rw-r--r-- | docs/guides/monitor/anomaly-detection-python.md | 36 |
| -rw-r--r-- | docs/guides/monitor/anomaly-detection.md | 16 |
| -rw-r--r-- | docs/guides/monitor/dimension-templates.md | 37 |
| -rw-r--r-- | docs/guides/monitor/kubernetes-k8s-netdata.md | 28 |
| -rw-r--r-- | docs/guides/monitor/lamp-stack.md | 42 |
| -rw-r--r-- | docs/guides/monitor/pi-hole-raspberry-pi.md | 26 |
| -rw-r--r-- | docs/guides/monitor/process.md | 231 |
| -rw-r--r-- | docs/guides/monitor/raspberry-pi-anomaly-detection.md | 22 |
| -rw-r--r-- | docs/guides/monitor/statsd.md | 14 |
| -rw-r--r-- | docs/guides/monitor/stop-notifications-alarms.md | 12 |
| -rw-r--r-- | docs/guides/monitor/visualize-monitor-anomalies.md | 28 |

11 files changed, 254 insertions, 238 deletions
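
Most hunks in this diff apply the same mechanical rewrite: a root-relative Markdown link target such as `/docs/get-started.mdx` becomes an absolute GitHub URL under `https://github.com/netdata/netdata/blob/master`. The sketch below illustrates that rewrite only. It is not the tooling used for this commit, the function name `absolutize_links` is hypothetical, and it does not cover the `learn.netdata.cloud` links, which the diff maps to repository READMEs individually.

```python
import re

# Assumed base URL, taken from the replacement links visible in this diff.
GITHUB_BASE = "https://github.com/netdata/netdata/blob/master"

# Matches a Markdown inline link target that starts with a single "/",
# e.g. "](/docs/get-started.mdx)". Targets starting with "//" or a scheme
# are left untouched. Any "#anchor" suffix is captured with the path.
ROOT_RELATIVE_LINK = re.compile(r"\]\((/(?!/)[^)\s]*)\)")


def absolutize_links(markdown: str, base: str = GITHUB_BASE) -> str:
    """Rewrite root-relative Markdown link targets as absolute URLs (hypothetical helper)."""
    return ROOT_RELATIVE_LINK.sub(lambda m: f"]({base}{m.group(1)})", markdown)
```

Running a pattern like this over each file under `docs/guides/monitor` would produce changes of the same shape as the `/docs/...`, `/claim/...`, `/health/...`, and `/collectors/...` replacements in the hunks that follow. Links that were already absolute pass through unchanged.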

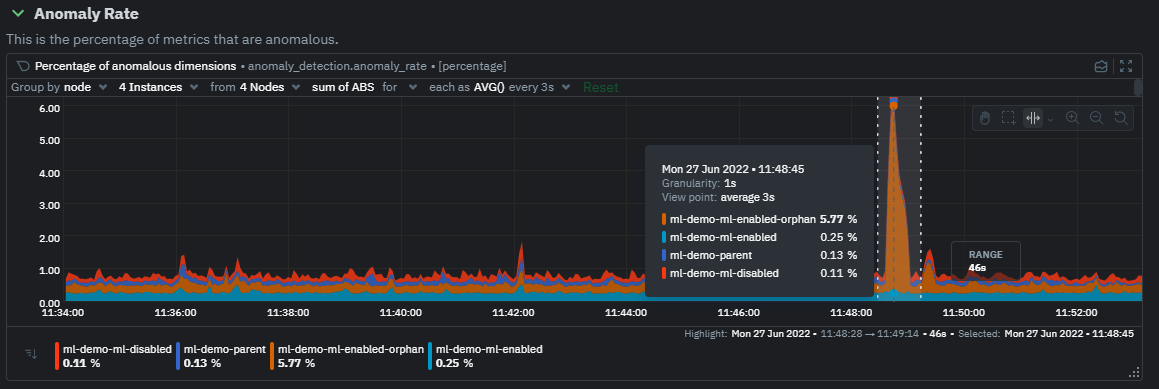

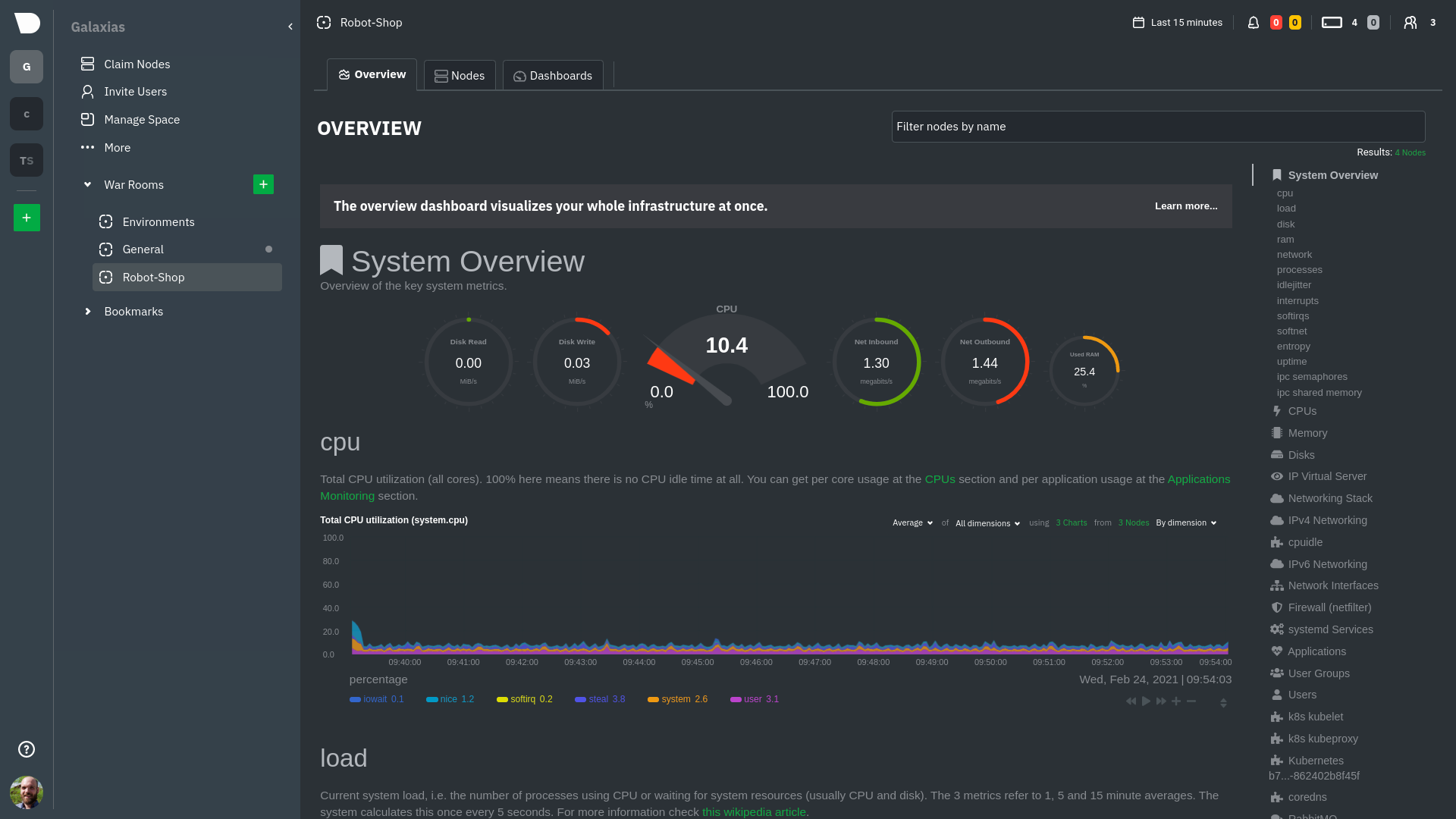

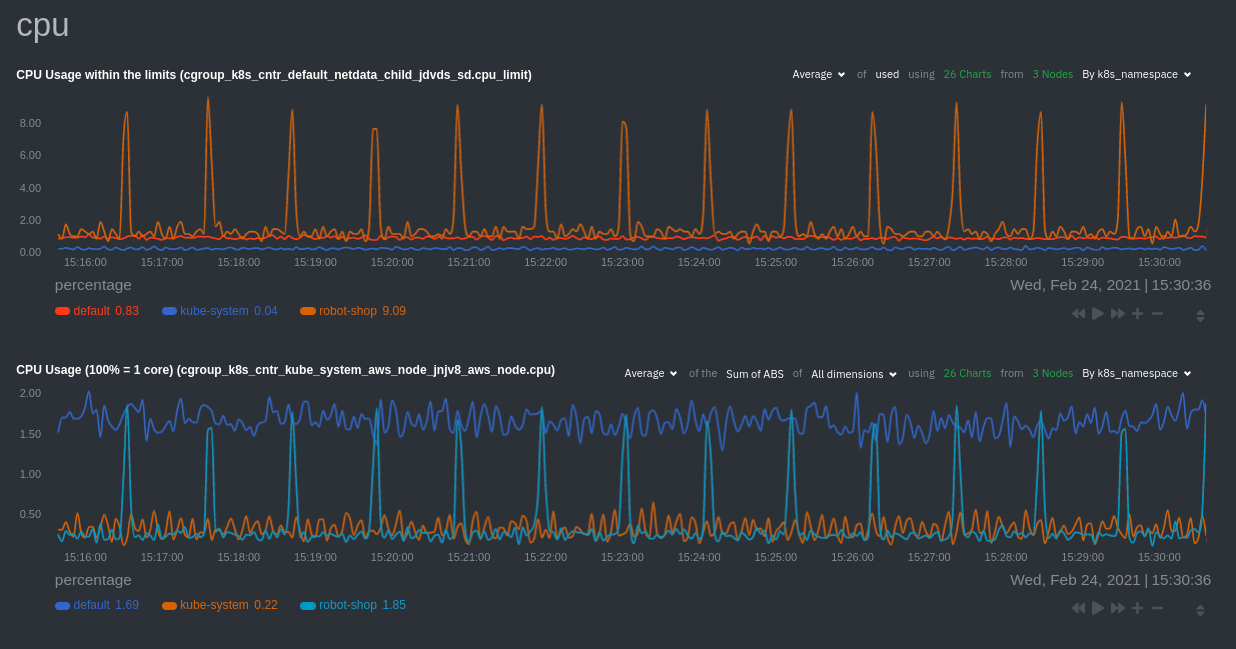

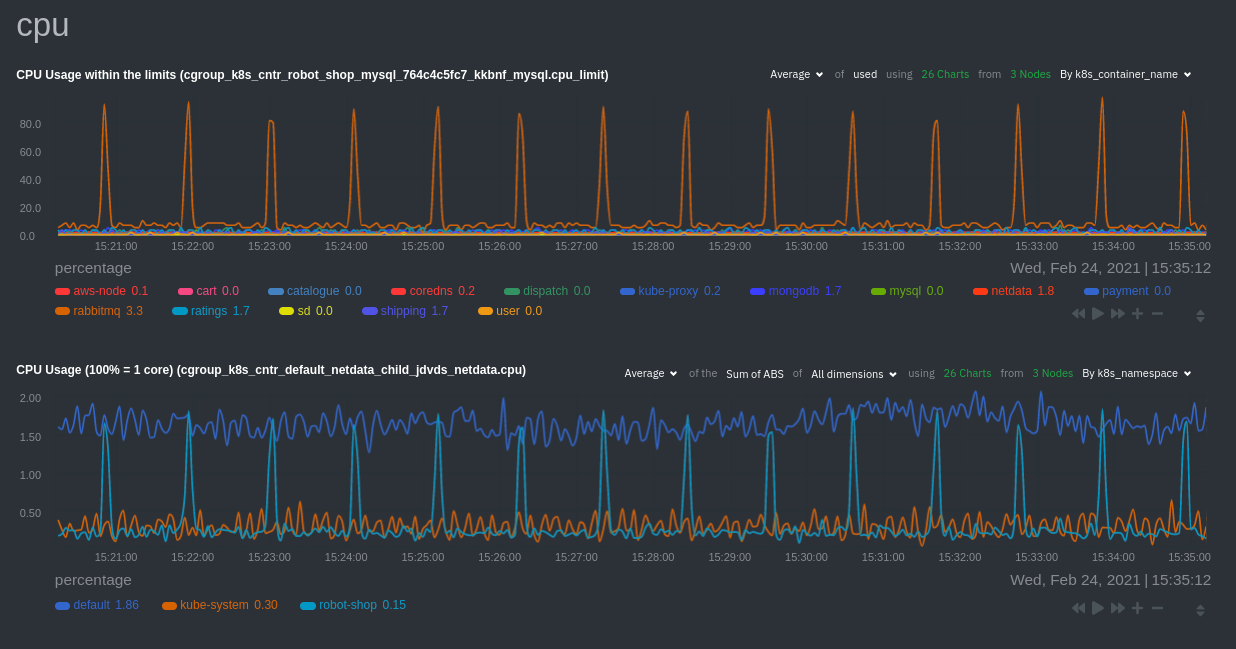

diff --git a/docs/guides/monitor/anomaly-detection-python.md b/docs/guides/monitor/anomaly-detection-python.md index ad8398cc68..d6d27f4e57 100644 --- a/docs/guides/monitor/anomaly-detection-python.md +++ b/docs/guides/monitor/anomaly-detection-python.md @@ -23,7 +23,7 @@ library](https://github.com/yzhao062/pyod/tree/master), which periodically runs quantify how anomalous certain charts are. All these metrics and alarms are available for centralized monitoring in [Netdata Cloud](https://app.netdata.cloud). If -you choose to sign up for Netdata Cloud and [connect your nodes](/claim/README.md), you will have the ability to run +you choose to sign up for Netdata Cloud and [connect your nodes](https://github.com/netdata/netdata/blob/master/claim/README.md), you will have the ability to run tailored anomaly detection on every node in your infrastructure, regardless of its purpose or workload. In this guide, you'll learn how to set up the anomalies collector to instantly detect anomalies in an Nginx web server @@ -35,9 +35,9 @@ server](https://user-images.githubusercontent.com/1153921/103586700-da5b0a00-4ea ## Prerequisites -- A node running the Netdata Agent. If you don't yet have that, [get Netdata](/docs/get-started.mdx). +- A node running the Netdata Agent. If you don't yet have that, [get Netdata](https://github.com/netdata/netdata/blob/master/docs/get-started.mdx). - A Netdata Cloud account. [Sign up](https://app.netdata.cloud) if you don't have one already. -- Familiarity with configuring the Netdata Agent with [`edit-config`](/docs/configure/nodes.md). +- Familiarity with configuring the Netdata Agent with [`edit-config`](https://github.com/netdata/netdata/blob/master/docs/configure/nodes.md). - _Optional_: An Nginx web server running on the same node to follow the example configuration steps. ## Install required Python packages @@ -65,7 +65,7 @@ Use `exit` to become your normal user again. 
## Enable the anomalies collector -Navigate to your [Netdata config directory](/docs/configure/nodes.md#the-netdata-config-directory) and use `edit-config` +Navigate to your [Netdata config directory](https://github.com/netdata/netdata/blob/master/docs/configure/nodes.md#the-netdata-config-directory) and use `edit-config` to open the `python.d.conf` file. ```bash @@ -79,8 +79,8 @@ yourself if it doesn't already exist. Either way, the final result should look l anomalies: yes ``` -[Restart the Agent](/docs/configure/start-stop-restart.md) with `sudo systemctl restart netdata`, or the [appropriate -method](/docs/configure/start-stop-restart.md) for your system, to start up the anomalies collector. By default, the +[Restart the Agent](https://github.com/netdata/netdata/blob/master/docs/configure/start-stop-restart.md) with `sudo systemctl restart netdata`, or the [appropriate +method](https://github.com/netdata/netdata/blob/master/docs/configure/start-stop-restart.md) for your system, to start up the anomalies collector. By default, the model training process runs every 30 minutes, and uses the previous 4 hours of metrics to establish a baseline for health and performance across the default included charts. @@ -105,7 +105,7 @@ involve tweaking the behavior of the ML training itself. - `train_every_n`: How often to train the ML models. - `train_n_secs`: The number of historical observations to train each model on. The default is 4 hours, but if your node doesn't have historical metrics going back that far, consider [changing the metrics retention - policy](/docs/store/change-metrics-storage.md) or reducing this window. + policy](https://github.com/netdata/netdata/blob/master/docs/store/change-metrics-storage.md) or reducing this window. - `custom_models`: A way to define custom models that you want anomaly probabilities for, including multi-node or streaming setups. @@ -119,8 +119,8 @@ involve tweaking the behavior of the ML training itself. 
As mentioned above, this guide uses an Nginx web server to demonstrate how the anomalies collector works. You must configure the collector to monitor charts from the -[Nginx](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/nginx) and [web -log](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/weblog) collectors. +[Nginx](https://github.com/netdata/go.d.plugin/blob/master/modules/nginx/README.md) and [web +log](https://github.com/netdata/go.d.plugin/blob/master/modules/weblog/README.md) collectors. `charts_regex` allows for some basic regex, such as wildcards (`*`) to match all contexts with a certain pattern. For example, `system\..*` matches with any chart with a context that begins with `system.`, and ends in any number of other @@ -163,27 +163,27 @@ volume of requests/responses, not, for example, which type of 4xx response a use dimensions](https://user-images.githubusercontent.com/1153921/102820642-d69f9180-4392-11eb-91c5-d3d166d40105.png) Apply the ideas behind the collector's regex and exclude settings to any other -[system](/docs/collect/system-metrics.md), [container](/docs/collect/container-metrics.md), or -[application](/docs/collect/application-metrics.md) metrics you want to detect anomalies for. +[system](https://github.com/netdata/netdata/blob/master/docs/collect/system-metrics.md), [container](https://github.com/netdata/netdata/blob/master/docs/collect/container-metrics.md), or +[application](https://github.com/netdata/netdata/blob/master/docs/collect/application-metrics.md) metrics you want to detect anomalies for. ## What's next? Now that you know how to set up unsupervised anomaly detection in the Netdata Agent, using an Nginx web server as an example, it's time to apply that knowledge to other mission-critical parts of your infrastructure. 
If you're not sure -what to monitor next, check out our list of [collectors](/collectors/COLLECTORS.md) to see what kind of metrics Netdata +what to monitor next, check out our list of [collectors](https://github.com/netdata/netdata/blob/master/collectors/COLLECTORS.md) to see what kind of metrics Netdata can collect from your systems, containers, and applications. -Keep on moving to [part 2](/docs/guides/monitor/visualize-monitor-anomalies.md), which covers the charts and alarms +Keep on moving to [part 2](https://github.com/netdata/netdata/blob/master/docs/guides/monitor/visualize-monitor-anomalies.md), which covers the charts and alarms Netdata creates for unsupervised anomaly detection. For a different troubleshooting experience, try out the [Metric -Correlations](https://learn.netdata.cloud/docs/cloud/insights/metric-correlations) feature in Netdata Cloud. Metric +Correlations](https://github.com/netdata/netdata/blob/master/docs/cloud/insights/metric-correlations.md) feature in Netdata Cloud. Metric Correlations helps you perform faster root cause analysis by narrowing a dashboard to only the charts most likely to be related to an anomaly. 
### Related reference documentation -- [Netdata Agent · Anomalies collector](/collectors/python.d.plugin/anomalies/README.md) -- [Netdata Agent · Nginx collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/nginx) -- [Netdata Agent · web log collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/weblog) -- [Netdata Cloud · Metric Correlations](https://learn.netdata.cloud/docs/cloud/insights/metric-correlations) +- [Netdata Agent · Anomalies collector](https://github.com/netdata/netdata/blob/master/collectors/python.d.plugin/anomalies/README.md) +- [Netdata Agent · Nginx collector](https://github.com/netdata/go.d.plugin/blob/master/modules/nginx/README.md) +- [Netdata Agent · web log collector](https://github.com/netdata/go.d.plugin/blob/master/modules/weblog/README.md) +- [Netdata Cloud · Metric Correlations](https://github.com/netdata/netdata/blob/master/docs/cloud/insights/metric-correlations.md) diff --git a/docs/guides/monitor/anomaly-detection.md b/docs/guides/monitor/anomaly-detection.md index 7f7727f53c..ce819d937a 100644 --- a/docs/guides/monitor/anomaly-detection.md +++ b/docs/guides/monitor/anomaly-detection.md @@ -14,7 +14,7 @@ custom_edit_url: https://github.com/netdata/netdata/edit/master/docs/guides/moni As of [`v1.32.0`](https://github.com/netdata/netdata/releases/tag/v1.32.0), Netdata comes with some ML powered [anomaly detection](https://en.wikipedia.org/wiki/Anomaly_detection) capabilities built into it and available to use out of the box, with zero configuration required (ML was enabled by default in `v1.35.0-29-nightly` in [this PR](https://github.com/netdata/netdata/pull/13158), previously it required a one line config change). 
-This means that in addition to collecting raw value metrics, the Netdata agent will also produce an [`anomaly-bit`](https://learn.netdata.cloud/docs/agent/ml#anomaly-bit---100--anomalous-0--normal) every second which will be `100` when recent raw metric values are considered anomalous by Netdata and `0` when they look normal. Once we aggregate beyond one second intervals this aggregated `anomaly-bit` becomes an ["anomaly rate"](https://learn.netdata.cloud/docs/agent/ml#anomaly-rate---averageanomaly-bit). +This means that in addition to collecting raw value metrics, the Netdata agent will also produce an [`anomaly-bit`](https://github.com/netdata/netdata/blob/master/ml/README.md#anomaly-bit---100--anomalous-0--normal) every second which will be `100` when recent raw metric values are considered anomalous by Netdata and `0` when they look normal. Once we aggregate beyond one second intervals this aggregated `anomaly-bit` becomes an ["anomaly rate"](https://github.com/netdata/netdata/blob/master/ml/README.md#anomaly-rate---averageanomaly-bit). To be as concrete as possible, the below api call shows how to access the raw anomaly bit of the `system.cpu` chart from the [london.my-netdata.io](https://london.my-netdata.io) Netdata demo server. Passing `options=anomaly-bit` returns the anomaly bit instead of the raw metric value. 
@@ -22,19 +22,19 @@ To be as concrete as possible, the below api call shows how to access the raw an https://london.my-netdata.io/api/v1/data?chart=system.cpu&options=anomaly-bit ``` -If we aggregate the above to just 1 point by adding `points=1` we get an "[Anomaly Rate](https://learn.netdata.cloud/docs/agent/ml#anomaly-rate---averageanomaly-bit)": +If we aggregate the above to just 1 point by adding `points=1` we get an "[Anomaly Rate](https://github.com/netdata/netdata/blob/master/ml/README.md#anomaly-rate---averageanomaly-bit)": ``` https://london.my-netdata.io/api/v1/data?chart=system.cpu&options=anomaly-bit&points=1 ``` -The fundamentals of Netdata's anomaly detection approach and implementation are covered in lots more detail in the [agent ML documentation](https://learn.netdata.cloud/docs/agent/ml). +The fundamentals of Netdata's anomaly detection approach and implementation are covered in lots more detail in the [agent ML documentation](https://github.com/netdata/netdata/blob/master/ml/README.md). This guide will explain how to get started using these ML based anomaly detection capabilities within Netdata. ## Anomaly Advisor -The [Anomaly Advisor](https://learn.netdata.cloud/docs/cloud/insights/anomaly-advisor) is the flagship anomaly detection feature within Netdata. In the "Anomalies" tab of Netdata you will see an overall "Anomaly Rate" chart that aggregates node level anomaly rate for all nodes in a space. The aim of this chart is to make it easy to quickly spot periods of time where the overall "[node anomaly rate](https://learn.netdata.cloud/docs/agent/ml#node-anomaly-rate)" is elevated in some unusual way and for what node or nodes this relates to. +The [Anomaly Advisor](https://github.com/netdata/netdata/blob/master/docs/cloud/insights/anomaly-advisor.mdx) is the flagship anomaly detection feature within Netdata. 
In the "Anomalies" tab of Netdata you will see an overall "Anomaly Rate" chart that aggregates node level anomaly rate for all nodes in a space. The aim of this chart is to make it easy to quickly spot periods of time where the overall "[node anomaly rate](https://github.com/netdata/netdata/blob/master/ml/README.md#node-anomaly-rate)" is elevated in some unusual way and for what node or nodes this relates to.  @@ -44,7 +44,7 @@ Once an area on the Anomaly Rate chart is highlighted netdata will append a "hea ## Embedded Anomaly Rate Charts -Charts in both the [Overview](https://learn.netdata.cloud/docs/cloud/visualize/overview) and [single node dashboard](https://learn.netdata.cloud/docs/cloud/visualize/overview#jump-to-single-node-dashboards) tabs also expose the underlying anomaly rates for each dimension so users can easily see if the raw metrics are considered anomalous or not by Netdata. +Charts in both the [Overview](https://github.com/netdata/netdata/blob/master/docs/cloud/visualize/overview.md) and [single node dashboard](https://github.com/netdata/netdata/blob/master/docs/cloud/visualize/overview.md#jump-to-single-node-dashboards) tabs also expose the underlying anomaly rates for each dimension so users can easily see if the raw metrics are considered anomalous or not by Netdata. Pressing the anomalies icon (next to the information icon in the chart header) will expand the anomaly rate chart to make it easy to see how the anomaly rate for any individual dimension corresponds to the raw underlying data. In the example below we can see that the spike in `system.pgpgio|in` corresponded in the anomaly rate for that dimension jumping to 100% for a small period of time until the spike passed. @@ -65,9 +65,9 @@ You can see some example ML based alert configurations below: Check out the resources below to learn more about how Netdata is approaching ML: -- [Agent ML documentation](https://learn.netdata.cloud/docs/agent/ml). 
-- [Anomaly Advisor documentation](https://learn.netdata.cloud/docs/cloud/insights/anomaly-advisor). -- [Metric Correlations documentation](https://learn.netdata.cloud/docs/cloud/insights/metric-correlations). +- [Agent ML documentation](https://github.com/netdata/netdata/blob/master/ml/README.md). +- [Anomaly Advisor documentation](https://github.com/netdata/netdata/blob/master/docs/cloud/insights/anomaly-advisor.mdx). +- [Metric Correlations documentation](https://github.com/netdata/netdata/blob/master/docs/cloud/insights/metric-correlations.md). - Anomaly Advisor [launch blog post](https://www.netdata.cloud/blog/introducing-anomaly-advisor-unsupervised-anomaly-detection-in-netdata/). - Netdata Approach to ML [blog post](https://www.netdata.cloud/blog/our-approach-to-machine-learning/). - `areal/ml` related [GitHub Discussions](https://github.com/netdata/netdata/discussions?discussions_q=label%3Aarea%2Fml). diff --git a/docs/guides/monitor/dimension-templates.md b/docs/guides/monitor/dimension-templates.md index 539127366c..d2795a9c60 100644 --- a/docs/guides/monitor/dimension-templates.md +++ b/docs/guides/monitor/dimension-templates.md @@ -8,24 +8,27 @@ custom_edit_url: https://github.com/netdata/netdata/edit/master/docs/guides/moni Your ability to monitor the health of your systems and applications relies on your ability to create and maintain the best set of alarms for your particular needs. -In v1.18 of Netdata, we introduced **dimension templates** for alarms, which simplifies the process of writing [alarm -entities](/health/REFERENCE.md#health-entity-reference) for charts with many dimensions. +In v1.18 of Netdata, we introduced **dimension templates** for alarms, which simplifies the process of +writing [alarm entities](https://github.com/netdata/netdata/blob/master/health/REFERENCE.md#health-entity-reference) for +charts with many dimensions. 
Dimension templates can condense many individual entities into one—no more copy-pasting one entity and changing the `alarm`/`template` and `lookup` lines for each dimension you'd like to monitor. They are, however, an advanced health monitoring feature. For more basic instructions on creating your first alarm, -check out our [health monitoring documentation](/health/README.md), which also includes -[examples](/health/REFERENCE.md#example-alarms). +check out our [health monitoring documentation](https://github.com/netdata/netdata/blob/master/health/README.md), which also includes +[examples](https://github.com/netdata/netdata/blob/master/health/REFERENCE.md#example-alarms). ## The fundamentals of `foreach` -Our dimension templates update creates a new `foreach` parameter to the existing [`lookup` -line](/health/REFERENCE.md#alarm-line-lookup). This is where the magic happens. +Our dimension templates update creates a new `foreach` parameter to the +existing [`lookup` line](https://github.com/netdata/netdata/blob/master/health/REFERENCE.md#alarm-line-lookup). This +is where the magic happens. You use the `foreach` parameter to specify which dimensions you want to monitor with this single alarm. You can separate -them with a comma (`,`) or a pipe (`|`). You can also use a [Netdata simple pattern](/libnetdata/simple_pattern/README.md) -to create many alarms with a regex-like syntax. +them with a comma (`,`) or a pipe (`|`). You can also use +a [Netdata simple pattern](https://github.com/netdata/netdata/blob/master/libnetdata/simple_pattern/README.md) to create +many alarms with a regex-like syntax. The `foreach` parameter _has_ to be the last parameter in your `lookup` line, and if you have both `of` and `foreach` in the same `lookup` line, Netdata will ignore the `of` parameter and use `foreach` instead. 
@@ -95,7 +98,7 @@ Let's look at some other examples of how `foreach` works so you can best apply i In the last example, we used `foreach system,user,nice` to create three distinct alarms using dimension templates. But what if you want to quickly create alarms for _all_ the dimensions of a given chart? -Use a [simple pattern](/libnetdata/simple_pattern/README.md)! One example of a simple pattern is a single wildcard +Use a [simple pattern](https://github.com/netdata/netdata/blob/master/libnetdata/simple_pattern/README.md)! One example of a simple pattern is a single wildcard (`*`). Instead of monitoring system CPU usage, let's monitor per-application CPU usage using the `apps.cpu` chart. Passing a @@ -113,14 +116,15 @@ lookup: average -10m percentage foreach * This entity will now create alarms for every dimension in the `apps.cpu` chart. Given that most `apps.cpu` charts have 10 or more dimensions, using the wildcard ensures you catch every CPU-hogging process. -To learn more about how to use simple patterns with dimension templates, see our [simple patterns -documentation](/libnetdata/simple_pattern/README.md). +To learn more about how to use simple patterns with dimension templates, see +our [simple patterns documentation](https://github.com/netdata/netdata/blob/master/libnetdata/simple_pattern/README.md). ## Using `foreach` with alarm templates -Dimension templates also work with [alarm templates](/health/REFERENCE.md#alarm-line-alarm-or-template). Alarm -templates help you create alarms for all the charts with a given context—for example, all the cores of your system's -CPU. +Dimension templates also work +with [alarm templates](https://github.com/netdata/netdata/blob/master/health/REFERENCE.md#alarm-line-alarm-or-template). +Alarm templates help you create alarms for all the charts with a given context—for example, all the cores of your +system's CPU. By combining the two, you can create dozens of individual alarms with a single template entity. 
Here's how you would create alarms for the `system`, `user`, and `nice` dimensions for every chart in the `cpu.cpu` context—or, in other @@ -170,7 +174,8 @@ alarms that will help you better monitor the health of your systems. Or, at the very least, simplify your configuration files. -For information about other advanced features in Netdata's health monitoring toolkit, check out our [health -documentation](/health/README.md). And if you have some cool alarms you built using dimension templates, +For information about other advanced features in Netdata's health monitoring toolkit, check out +our [health documentation](https://github.com/netdata/netdata/blob/master/health/README.md). And if you have some cool +alarms you built using dimension templates, diff --git a/docs/guides/monitor/kubernetes-k8s-netdata.md b/docs/guides/monitor/kubernetes-k8s-netdata.md index 5cfefe8923..5732fc96c6 100644 --- a/docs/guides/monitor/kubernetes-k8s-netdata.md +++ b/docs/guides/monitor/kubernetes-k8s-netdata.md @@ -46,7 +46,7 @@ To follow this tutorial, you need: - A free Netdata Cloud account. [Sign up](https://app.netdata.cloud/sign-up?cloudRoute=/spaces) if you don't have one already. - A working cluster running Kubernetes v1.9 or newer, with a Netdata deployment and connected parent/child nodes. See - our [Kubernetes deployment process](/packaging/installer/methods/kubernetes.md) for details on deployment and + our [Kubernetes deployment process](https://github.com/netdata/netdata/blob/master/packaging/installer/methods/kubernetes.md) for details on deployment and conneting to Cloud. - The [`kubectl`](https://kubernetes.io/docs/reference/kubectl/overview/) command line tool, within [one minor version difference](https://kubernetes.io/docs/tasks/tools/install-kubectl/#before-you-begin) of your cluster, on an @@ -104,7 +104,7 @@ To get started, [sign in](https://app.netdata.cloud/sign-in?cloudRoute=/spaces) to the War Room you connected your cluster to, if not **General**. 
Netdata Cloud is already visualizing your Kubernetes metrics, streamed in real-time from each node, in the -[Overview](https://learn.netdata.cloud/docs/cloud/visualize/overview): +[Overview](https://github.com/netdata/netdata/blob/master/docs/cloud/visualize/overview.md):  @@ -126,8 +126,8 @@ cluster](https://user-images.githubusercontent.com/1153921/109042169-19c8fa00-76 For example, the chart above shows a spike in the CPU utilization from `rabbitmq` every minute or so, along with a baseline CPU utilization of 10-15% across the cluster. -Read about the [Overview](https://learn.netdata.cloud/docs/cloud/visualize/overview) and some best practices on [viewing -an overview of your infrastructure](/docs/visualize/overview-infrastructure.md) for details on using composite charts to +Read about the [Overview](https://github.com/netdata/netdata/blob/master/docs/cloud/visualize/overview.md) and some best practices on [viewing +an overview of your infrastructure](https://github.com/netdata/netdata/blob/master/docs/visualize/overview-infrastructure.md) for details on using composite charts to drill down into per-node performance metrics. ## Pod and container metrics @@ -154,7 +154,7 @@ Let's explore the most colorful box by hovering over it. container](https://user-images.githubusercontent.com/1153921/109049544-a8417980-7695-11eb-80a7-109b4a645a27.png) The **Context** tab shows `rabbitmq-5bb66bb6c9-6xr5b` as the container's image name, which means this container is -running a [RabbitMQ](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/rabbitmq) workload. +running a [RabbitMQ](https://github.com/netdata/go.d.plugin/blob/master/modules/rabbitmq/README.md) workload. Click the **Metrics** tab to see real-time metrics from that container. Unsurprisingly, it shows a spike in CPU utilization at regular intervals. @@ -173,7 +173,7 @@ different namespaces.  
-Each composite chart has a [definition bar](https://learn.netdata.cloud/docs/cloud/visualize/overview#definition-bar) +Each composite chart has a [definition bar](https://github.com/netdata/netdata/blob/master/docs/cloud/visualize/overview.md#definition-bar) for complete customization. For example, grouping the top chart by `k8s_container_name` reveals new information.  @@ -183,20 +183,20 @@ for complete customization. For example, grouping the top chart by `k8s_containe Netdata has a [service discovery plugin](https://github.com/netdata/agent-service-discovery), which discovers and creates configuration files for [compatible services](https://github.com/netdata/helmchart#service-discovery-and-supported-services) and any endpoints covered by -our [generic Prometheus collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/prometheus). +our [generic Prometheus collector](https://github.com/netdata/go.d.plugin/blob/master/modules/prometheus/README.md). Netdata uses these files to collect metrics from any compatible application as they run _inside_ of a pod. Service discovery happens without manual intervention as pods are created, destroyed, or moved between nodes. Service metrics show up on the Overview as well, beneath the **Kubernetes** section, and are labeled according to the service in question. For example, the **RabbitMQ** section has numerous charts from the [`rabbitmq` -collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/rabbitmq): +collector](https://github.com/netdata/go.d.plugin/blob/master/modules/rabbitmq/README.md):  > The robot-shop cluster has more supported services, such as MySQL, which are not visible with zero configuration. This > is usually because of services running on non-default ports, using non-default names, or required passwords. 
Read up -> on [configuring service discovery](/packaging/installer/methods/kubernetes.md#configure-service-discovery) to collect +> on [configuring service discovery](https://github.com/netdata/netdata/blob/master/packaging/installer/methods/kubernetes.md#configure-service-discovery) to collect > more service metrics. Service metrics are essential to infrastructure monitoring, as they're the best indicator of the end-user experience, @@ -210,7 +210,7 @@ Netdata also automatically collects metrics from two essential Kubernetes proces The **k8s kubelet** section visualizes metrics from the Kubernetes agent responsible for managing every pod on a given node. This also happens without any configuration thanks to the [kubelet -collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/k8s_kubelet). +collector](https://github.com/netdata/go.d.plugin/blob/master/modules/k8s_kubelet/README.md). Monitoring each node's kubelet can be invaluable when diagnosing issues with your Kubernetes cluster. For example, you can see if the number of running containers/pods has dropped, which could signal a fault or crash in a particular @@ -226,7 +226,7 @@ configuration-related errors, and the actual vs. desired numbers of volumes, plu The **k8s kube-proxy** section displays metrics about the network proxy that runs on each node in your Kubernetes cluster. kube-proxy lets pods communicate with each other and accept sessions from outside your cluster. Its metrics are collected by the [kube-proxy -collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/k8s_kubeproxy). +collector](https://github.com/netdata/go.d.plugin/blob/master/modules/k8s_kubeproxy/README.md). With Netdata, you can monitor how often your k8s proxies are syncing proxy rules between nodes. Dramatic changes in these figures could indicate an anomaly in your cluster that's worthy of further investigation. @@ -246,9 +246,9 @@ clusters of all sizes. 
- [Netdata Helm chart](https://github.com/netdata/helmchart) - [Netdata service discovery](https://github.com/netdata/agent-service-discovery) - [Netdata Agent · `kubelet` - collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/k8s_kubelet) + collector](https://github.com/netdata/go.d.plugin/blob/master/modules/k8s_kubelet/README.md) - [Netdata Agent · `kube-proxy` - collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/k8s_kubeproxy) -- [Netdata Agent · `cgroups.plugin`](/collectors/cgroups.plugin/README.md) + collector](https://github.com/netdata/go.d.plugin/blob/master/modules/k8s_kubeproxy/README.md) +- [Netdata Agent · `cgroups.plugin`](https://github.com/netdata/netdata/blob/master/collectors/cgroups.plugin/README.md) diff --git a/docs/guides/monitor/lamp-stack.md b/docs/guides/monitor/lamp-stack.md index 29b35e142b..165888c4bf 100644 --- a/docs/guides/monitor/lamp-stack.md +++ b/docs/guides/monitor/lamp-stack.md @@ -58,7 +58,7 @@ To follow this tutorial, you need: ## Install the Netdata Agent If you don't have the free, open-source Netdata monitoring agent installed on your node yet, get started with a [single -kickstart command](/docs/get-started.mdx): +kickstart command](https://github.com/netdata/netdata/blob/master/docs/get-started.mdx): <OneLineInstallWget/> @@ -68,15 +68,15 @@ replacing `NODE` with the hostname or IP address of your system. ## Enable hardware and Linux system monitoring -There's nothing you need to do to enable [system monitoring](/docs/collect/system-metrics.md) and Linux monitoring with +There's nothing you need to do to enable [system monitoring](https://github.com/netdata/netdata/blob/master/docs/collect/system-metrics.md) and Linux monitoring with the Netdata Agent, which autodetects metrics from CPUs, memory, disks, networking devices, and Linux processes like systemd without any configuration. 
If you're using containers, Netdata automatically collects resource utilization -metrics from each using the [cgroups data collector](/collectors/cgroups.plugin/README.md). +metrics from each using the [cgroups data collector](https://github.com/netdata/netdata/blob/master/collectors/cgroups.plugin/README.md). ## Enable Apache monitoring Let's begin by configuring Apache to work with Netdata's [Apache data -collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/apache). +collector](https://github.com/netdata/go.d.plugin/blob/master/modules/apache/README.md). Actually, there's nothing for you to do to enable Apache monitoring with Netdata. @@ -87,7 +87,7 @@ metrics](https://httpd.apache.org/docs/2.4/mod/mod_status.html), which is just _ ## Enable web log monitoring The Netdata Agent also comes with a [web log -collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/weblog), which reads Apache's access +collector](https://github.com/netdata/go.d.plugin/blob/master/modules/weblog/README.md), which reads Apache's access log file, processes each line, and converts them into per-second metrics. On Debian systems, it reads the file at `/var/log/apache2/access.log`. @@ -100,7 +100,7 @@ monitoring. Because your MySQL database is password-protected, you do need to tell MySQL to allow the `netdata` user to connect to without a password. Netdata's [MySQL data -collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/mysql) collects metrics in _read-only_ +collector](https://github.com/netdata/go.d.plugin/blob/master/modules/mysql/README.md) collects metrics in _read-only_ mode, without being able to alter or affect operations in any way. First, log into the MySQL shell. 
 Then, run the following three commands, one at a time:
@@ -112,15 +112,15 @@ FLUSH PRIVILEGES;
 ``` Run `sudo systemctl restart netdata`, or the [appropriate alternative for your
-system](/docs/configure/start-stop-restart.md), to collect dozens of metrics every second for robust MySQL monitoring.
+system](https://github.com/netdata/netdata/blob/master/docs/configure/start-stop-restart.md), to collect dozens of metrics every second for robust MySQL monitoring.
 ## Enable PHP monitoring
 Unlike Apache or MySQL, PHP isn't a service that you can monitor directly, unless you instrument a PHP-based application
-with [StatsD](/collectors/statsd.plugin/README.md).
+with [StatsD](https://github.com/netdata/netdata/blob/master/collectors/statsd.plugin/README.md).
 However, if you use [PHP-FPM](https://php-fpm.org/) in your LAMP stack, you can monitor that process with our [PHP-FPM
-data collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/phpfpm).
+data collector](https://github.com/netdata/go.d.plugin/blob/master/modules/phpfpm/README.md).
 Open your PHP-FPM configuration for editing, replacing `7.4` with your version of PHP:
@@ -166,12 +166,12 @@ If the Netdata Agent isn't already open in your browser, open a new tab and navi
 > If you [signed up](https://app.netdata.cloud/sign-up?cloudRoute=/spaces) for Netdata Cloud earlier, you can also view
 > the exact same LAMP stack metrics there, plus additional features, like drag-and-drop custom dashboards. Be sure to
-> [connecting your node](/claim/README.md) to start streaming metrics to your browser through Netdata Cloud.
+> [connecting your node](https://github.com/netdata/netdata/blob/master/claim/README.md) to start streaming metrics to your browser through Netdata Cloud.
 Netdata automatically organizes all metrics and charts onto a single page for easy navigation. Peek at gauges to see
 overall system performance, then scroll down to see more.
 Click-and-drag with your mouse to pan _all_ charts back and
 forth through different time intervals, or hold `SHIFT` and use the scrollwheel (or two-finger scroll) to zoom in and
-out. Check out our doc on [interacting with charts](/docs/visualize/interact-dashboards-charts.md) for all the details.
+out. Check out our doc on [interacting with charts](https://github.com/netdata/netdata/blob/master/docs/visualize/interact-dashboards-charts.md) for all the details.
@@ -205,15 +205,15 @@ Here's a quick reference for what charts you might want to focus on after settin
 The Netdata Agent comes with hundreds of pre-configured alarms to help you keep tabs on your system, including 19 alarms
 designed for smarter LAMP stack monitoring.
-Click the 🔔 icon in the top navigation to [see active alarms](/docs/monitor/view-active-alarms.md). The **Active** tabs
+Click the 🔔 icon in the top navigation to [see active alarms](https://github.com/netdata/netdata/blob/master/docs/monitor/view-active-alarms.md). The **Active** tabs
 shows any alarms currently triggered, while the **All** tab displays a list of _every_ pre-configured alarm. The
-[Tweak alarms](/docs/monitor/configure-alarms.md) based on your infrastructure monitoring needs, and to see these alarms
+[Tweak alarms](https://github.com/netdata/netdata/blob/master/docs/monitor/configure-alarms.md) based on your infrastructure monitoring needs, and to see these alarms
 in other places, like your inbox or a Slack channel, [enable a notification
-method](/docs/monitor/enable-notifications.md).
+method](https://github.com/netdata/netdata/blob/master/docs/monitor/enable-notifications.md).
 ## What's next?
@@ -223,7 +223,7 @@ services. The per-second metrics granularity means you have the most accurate in
 any LAMP-related issues.
 Another powerful way to monitor the availability of a LAMP stack is the [`httpcheck`
-collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/httpcheck), which pings a web server at
+collector](https://github.com/netdata/go.d.plugin/blob/master/modules/httpcheck/README.md), which pings a web server at
 a regular interval and tells you whether if and how quickly it's responding. The `response_match` option also lets you
 monitor when the web server's response isn't what you expect it to be, which might happen if PHP-FPM crashes, for
 example.
@@ -233,14 +233,14 @@ we're not covering it here, but it _does_ work in a single-node setup. Just don'
 node crashed.
 If you're planning on managing more than one node, or want to take advantage of advanced features, like finding the
-source of issues faster with [Metric Correlations](https://learn.netdata.cloud/docs/cloud/insights/metric-correlations),
+source of issues faster with [Metric Correlations](https://github.com/netdata/netdata/blob/master/docs/cloud/insights/metric-correlations.md),
 [sign up](https://app.netdata.cloud/sign-up?cloudRoute=/spaces) for a free Netdata Cloud account.
 ### Related reference documentation
-- [Netdata Agent · Get started](/docs/get-started.mdx)
-- [Netdata Agent · Apache data collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/apache)
-- [Netdata Agent · Web log collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/weblog)
-- [Netdata Agent · MySQL data collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/mysql)
-- [Netdata Agent · PHP-FPM data collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/phpfpm)
+- [Netdata Agent · Get started](https://github.com/netdata/netdata/blob/master/docs/get-started.mdx)
+- [Netdata Agent · Apache data collector](https://github.com/netdata/go.d.plugin/blob/master/modules/apache/README.md)
+- [Netdata Agent · Web log collector](https://github.com/netdata/go.d.plugin/blob/master/modules/weblog/README.md)
+- [Netdata Agent · MySQL data collector](https://github.com/netdata/go.d.plugin/blob/master/modules/mysql/README.md)
+- [Netdata Agent · PHP-FPM data collector](https://github.com/netdata/go.d.plugin/blob/master/modules/phpfpm/README.md)
diff --git a/docs/guides/monitor/pi-hole-raspberry-pi.md b/docs/guides/monitor/pi-hole-raspberry-pi.md
index 1246d8ba12..5099d12b95 100644
--- a/docs/guides/monitor/pi-hole-raspberry-pi.md
+++ b/docs/guides/monitor/pi-hole-raspberry-pi.md
@@ -79,7 +79,7 @@ service](https://discourse.pi-hole.net/t/how-do-i-configure-my-devices-to-use-pi
 finished setting up Pi-hole at this point.
 As far as configuring Netdata to monitor Pi-hole metrics, there's nothing you actually need to do. Netdata's [Pi-hole
-collector](https://learn.netdata.cloud/docs/agent/collectors/go.d.plugin/modules/pihole) will autodetect the new service
+collector](https://github.com/netdata/go.d.plugin/blob/master/modules/pihole/README.md) will autodetect the new service
 running on your Raspberry Pi and immediately start collecting metrics every second.
 Restart Netdata with `sudo systemctl restart netdata`, which will then recognize that Pi-hole is running and start a
@@ -98,15 +98,15 @@ part of your system might affect another.
-If you're completely new to Netdata, look at our [step- |